Second Lt. Madeline Schmitz, a student pilot, prepares to “take off” in the T-6 Texan II flight simulator at Columbus AFB, Miss. Photo: A1C Keith Holcomb

The Air Force is aggressively seeking new technologies that will be affordable and can be rapidly fielded to help the force become more lethal. When it comes to training, artificial intelligence and virtual reality are key to that effort. Lt. Gen. Steven L. Kwast, head of Air Education and Training Command, said the service is in the “nascent” stages of adopting virtual and augmented reality. USAF is still trying to understand exactly what impact this technology has on the human brain, how it can be used to make the force more effective, and how much it costs, he told reporters at AFA’s Air Warfare Symposium in Orlando, Fla.

Researchers have already discovered that humans learn visceral things, such as how to get in a cockpit and start up a jet, “pretty quickly,” said Kwast. It’s when they have to fuse various pieces of information together in a complex environment—as in a combat scenario where another human or machine is trying to kill them—that more training is required.

Student researchers attending USAF’s Air Command and Staff College recently conducted an adaptive flight training study at Columbus AFB, Miss., to help determine how a virtual environment can help adults learn. The students stood up three test groups, which included a range of experience levels, and asked them to fly a T-6 Texan II simulator without any prior T-6 flying time.

Lt. Gen. Steven Kwast speaks at the Developing Innovators panel at AFA’s Air Warfare Symposium on Feb. 22. Kwast says USAF is in the “nascent” stages of adopting virtual and augmented reality technologies for use in training. Staff photo by Mike Tsukamoto

Each test group flew multiple simulated missions in a virtual environment before taking a ride in the T-6 simulator. The targeted learning system measured a person’s performance and ability to learn in a virtual environment, according to a USAF news release. The study is one of many the Air Force is using to determine how such technology can be integrated into its training curriculum.

“When we find the data that really tells us what makes us good at this, then we will know how much virtual and augmented reality might play in future techniques for not only teaching people how to be good in this business, but accentuating their effectiveness at doing the job,” Kwast said at AWS18.

Virtual and augmented reality are “fundamentally different” than they were just a few years ago, he said.

As technology continues to advance, Kwast says he can see a time when the simulator for the new pilot trainer, now known as T-X, transitions from the bulky piece of equipment it is today, anchored in place, to a virtual environment that allows airmen to take it back to their dorm rooms for more practice if desired. The service isn’t there yet.

But Brig. Gen. James R. Sears, AETC’s operations director, said the Air Force isn’t waiting for the T-X to come online to start implementing this new technology.

“The continuum of learning and force development that we’re instituting is going to allow us to bring the tinkering into the system as it allows us to train better, faster, and cheaper for what we need,” Sears told reporters.

Second Lt. Kenneth Soyars takes off during a virtual reality flight simulation at Columbus AFB, Miss. Photo: A1C Keith Holcomb

For example, using virtual and augmented reality, the Air Force can teach a maintainer how to change the tire on an aircraft even when the aircraft is not physically present. An artificial intelligence coach can show the airman “where the bolt is and how many pounds of torque you need on that,” noted Kwast.

The Air Force awarded Massachusetts-based technology company Charles River Analytics a contract in August 2017 to build a deployable F-15E Strike Eagle game-based virtual environment trainer. The company is collaborating with the 365th Training Squadron at Sheppard AFB, Texas, and Sheppard’s Instructional Technology Unit on the project, which also is sponsored by the Air Force Research Laboratory. The virtual training system is slated for delivery in “late-spring 2019,” according to the Air Force.

“If we could have a virtual trainer, where you have your students all doing the operational check at the same time, under the supervision of an instructor in the virtual world, monitoring what’s going on, you can get a lot of work done really quick,” said Steven Canham, an F-15 avionics instructor at the 365th TRS, in the release. “It doesn’t necessarily mean that we can revert to solely teaching without a real aircraft, because you still have to understand that these students need to have dexterity and coordination and things like that.”

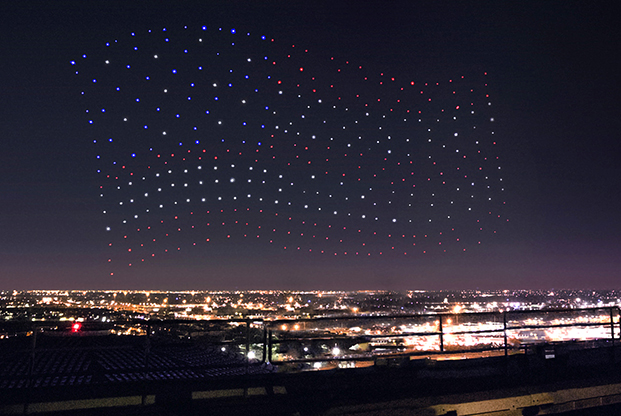

Air Force Special Operations Command is also at the “leading edge” of virtual reality and autonomous learning. AFSOC Commander Lt. Gen. Brad Webb said he was inspired by Lady Gaga’s 2017 Super Bowl halftime performance, in which she worked with Intel to create a spectacular light show using 300 quadcopters.

“That really is eye-opening,” Webb told reporters at AWS18. “Imagine the potential of this—that can be applied—if we did some automated learning that could provide sensor inputs, etc. That’s the kind of advancements I want to look at.”

An F-15E, imaged in 3-D by Charles River Analytics for the Air Force. The Cambridge, Mass., company is working with AETC to ensure the virtual trainer meets requirements to efficiently train aircraft maintainers. Graphic: CRA/USAF

The drones used algorithms to automate “the animation creation process by using a reference image, quickly calculating the number of drones needed, determining where drones should be placed, and formulating the fastest path to create the image in the sky,” according to geekwire.com.

Webb added that artificial intelligence “holds great promise” and said AFSOC has already run a series of tests and plans to do more.

Advancing technology is paying new dividends for the command. CMSgt. Gregory A. Smith, AFSOC’s command chief, said it used to take “14 flights to qualify a back-ender to do airdrops” from legacy MC-130s, but with the new J-model, it only takes two or three flights. “Everything else is done through the simulator or virtual reality,” he said.

The command also uses virtual reality goggles to place its CV-22 tail flight engineers and special mission aviators anywhere in the world, including hostile environments. The algorithms track the air commando’s decision-making and reaction to stressful situations. Artificial intelligence then takes that information and adjusts the scenarios.

Intel Shooting Star drones lit up the sky during halftime of the 2017 Super Bowl. An algorithm directed the drone swarm to create pictures such as this American Flag. Photo: Intel Corp

“As you tie it into assessment and selection and the ability to rapidly make decisions in complex austere environments, that’s where we’re really starting to learn,” said Smith. After practicing in the virtual environment, “we’re seeing significant [improvements] in their reaction time in the real environment,” he added.

Smith called the training scenarios “unwinnable situations,” saying the command has noticed “the computers will beat humans 99 percent of the time.” It’s something the younger generation is responding to well. His generation grew up playing Atari video games, but new recruits coming into the service today are used to highly realistic video games, such as Call of Duty, so it makes sense to use that technology for training.

“Ultimately, civilizations that thrive and survive are civilizations that learn faster and with more humility, meaning they are willing to change in order to do things better,” Kwast said. “That’s the journey we’re on.”